The generative-AI race for convincing video is no longer theoretical. It’s practical, fast, and getting dizzyingly good. OpenAI’s Sora 2 landed as one of the headline entrants in late 2025, promising not just better-looking clips but improvements in motion, physics, and native sound. But realism is multi-dimensional. To judge Sora 2 fairly, we need to break “realistic” into measurable parts: visual fidelity, motion and physics, temporal consistency (object and character permanence), audio realism and lip-sync, controllability, and edge-case failure modes. Then we can compare Sora 2 with peers such as Runway’s Gen series, Pika, and other cutting-edge lab models. Below I walk through each dimension and explain where Sora 2 shines, where it still stumbles, and how it stacks up against the competition.
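Breaking “realistic” into measurable parts becomes practical once you turn it into a simple weighted scorecard. The sketch below is a hypothetical illustration, not a published benchmark or any vendor’s API. The dimension names, the 0 to 10 scale, and the weights are all assumptions, and the scores are meant to come from your own side-by-side test clips.

```python
# Hypothetical realism scorecard: not an official benchmark or API.
# Dimension names follow the six parts of "realistic" used in this article.
DIMENSIONS = (
    "visual_fidelity",
    "motion_physics",
    "temporal_consistency",
    "audio_lipsync",
    "controllability",
    "failure_robustness",  # higher = fewer edge-case failures
)


def weighted_realism_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of 0-10 scores; dimensions with zero or missing weight are skipped."""
    unknown = (set(scores) | set(weights)) - set(DIMENSIONS)
    if unknown:
        raise ValueError(f"unknown dimensions: {sorted(unknown)}")
    weighted_sum = 0.0
    total_weight = 0.0
    for dim in DIMENSIONS:
        weight = weights.get(dim, 0.0)
        if weight < 0:
            raise ValueError(f"negative weight for {dim}")
        if weight == 0:
            continue  # e.g. give audio_lipsync weight 0 for silent b-roll
        if dim not in scores:
            raise ValueError(f"no score for weighted dimension {dim}")
        score = scores[dim]
        if not 0 <= score <= 10:
            raise ValueError(f"score for {dim} must be in [0, 10], got {score}")
        weighted_sum += weight * score
        total_weight += weight
    if total_weight == 0:
        raise ValueError("at least one dimension needs a positive weight")
    return weighted_sum / total_weight


def rank_tools(results: dict[str, dict[str, float]], weights: dict[str, float]) -> list[tuple[str, float]]:
    """Rank tools by weighted score, best first (ties keep input order)."""
    ranked = [(tool, weighted_realism_score(s, weights)) for tool, s in results.items()]
    return sorted(ranked, key=lambda item: item[1], reverse=True)
```

The weights carry the judgment. A dialogue-heavy social clip would weight `audio_lipsync` heavily. A silent photoreal product shot might weight `visual_fidelity` and set audio to zero. The same raw scores can therefore produce different winners, which matches the advice below to pick a tool by clip type.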

Visual fidelity: photoreal pixels vs cinematic impression

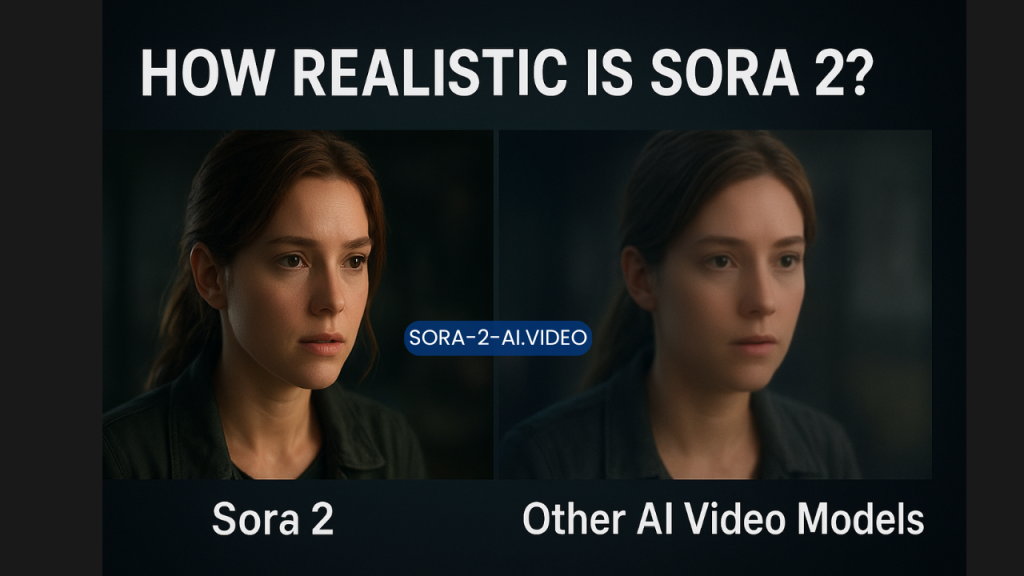

Visual quality can be split into texture detail, lighting accuracy, and photographic artifacts (warping, melting fingers, malformed hands). Sora 2 produces high-resolution output (consumer workflows show 720p–1080p and cinematic framing in many demos) and tends to deliver sharp textures, realistic materials, and plausible lighting transitions across short clips. That is the obvious baseline for “realistic.” OpenAI’s official notes emphasize improved physical accuracy and cinematic output in Sora 2’s generation pipeline.

However, Runway’s Gen line, particularly Gen-4 and newer variants, has been engineered specifically for visual fidelity and stylistic control. Its public demos have frequently shown frame-filling photorealism with fewer obvious neural artifacts in complex scenes. Runway’s research focus on consistency and cinematic control sometimes gives it an edge in shots that demand precise, continuous photorealism across cuts. In short, Sora 2 is top-tier for short cinematic clips, but Runway (and other specialized systems such as Pika’s latest builds) can match or surpass it on narrow photoreal benchmarks, depending on the scene.

Motion, physics, and object interaction

Where Sora 2 claims a major leap is physical realism. The model was specifically touted for better handling of motion, collisions, buoyancy, and other causal interactions within a scene. That matters because many older video models produced visually pleasing frames but failed at simple physics, with objects passing through each other, jittering trajectories, or implausible accelerations. Sora 2’s architecture and training choices aim to reduce those errors, and reviewers have observed clips with believable ballistics, natural falls, and more consistent object behavior.

Still, physics is hard. Competitors such as Runway have also prioritized dynamics; their later Gen releases emphasize “3D dynamics” and consistent world models that capture how objects move in space. Independent comparisons find that Sora 2 and Runway produce comparable motion plausibility in many scenes, but their failure modes differ. Sora 2 may perform better on multi-object interactions, whereas Runway sometimes leads on fluid dynamics or fine-grained human motion, depending on the prompt and reference material. The takeaway: both are strong, and the winner depends on the kind of physics you need.

Temporal consistency: characters, faces, and continuity

One critical marker of realism is whether the same character or object stays consistent over time — same face, same outfit, no shape-shift midway through a scene. This is where many earlier models failed spectacularly. Sora 2 includes multi-shot capabilities and features designed to preserve character identity across shots, reducing flicker and identity drift. Users experimenting with image-to-video prompts report much better character permanence compared to early-generation models.

Runway’s Gen family also emphasizes consistency. Runway’s approach often centers on “locking” character appearance and scene parameters so a model will render the same person across different angles and shots — a feature filmmakers find invaluable. Pika and other consumer-first tools have made large strides too, trading off some absolute photorealism to achieve higher character consistency and stylistic expressiveness. Practically, that means Sora 2 is competitive but not an undisputed leader: depending on whether you prioritize faithful face preservation or cinematic motion, another tool might be preferable.

Audio realism and synchronized dialogue

One of Sora 2’s headline differentiators is native audio generation — not just adding a soundtrack but producing dialogue that aligns with mouth movements and scene timing. That’s huge for perceived realism. Audio that is temporally aligned and contextually appropriate dramatically raises the viewer’s suspension of disbelief, especially for short social-format clips. OpenAI’s documentation and demo material highlight synchronized dialogue and sound effects as a distinguishing feature of Sora 2.

Competitors have historically focused on the visual side and left audio generation to separate tools or post-processing. Runway has explored automated sound design (for example, its Soundify research), but integrating natively lip-synced dialogue into a single generation pipeline is a newer capability, and it is where Sora 2 stands out. For use cases where dialogue and timing matter (short narratives, explainer clips, or social videos), Sora 2 will therefore often feel more “complete.”

Controllability and creative workflow

Realism is not only what the model produces automatically but how much control the user has: camera framing, lighting, shot sequencing, and prompt adherence. Sora 2’s multi-shot controls and app-level editing workflow are built for creators who want to combine automated generation with directed composition. The result is a practical hybrid: strong defaults for realism plus granular options when you need them.

Runway remains a powerhouse for controllability, offering modular tools for editing, keyframe-style prompts, and advanced pipelines that integrate into professional post-production. Pika’s strength is speed and ease of use, which sometimes trades off fine-grained control for immediacy and playful outputs. In short: for pro-level control, Runway tends to be the go-to; for integrated audio + video realism with good default controls, Sora 2 is exceptionally well-balanced.

Limitations and failure modes

No model is perfect. Common failure modes persist across this entire class of systems: longer sequences expose drift, complex hands and fingers still produce artifacts at times, and rare edge-case physics or intricate text on surfaces remains challenging. Sora 2 reduces but does not eliminate these issues. Models also occasionally hallucinate causal relationships (effects preceding causes) or generate uncanny artifacts when pushed to extremes of photorealism. Independent reporting on the field finds these problems in Sora 2 and in competing systems alike.

Ethical and legal constraints also shape what’s “realistic” in practice: watermarking, provenance labels, and content policies may reduce the ability to create perfect fake likenesses of public figures, which in an odd sense makes “realism” both a technical and a policy problem.

Use cases where Sora 2 is best — and where you might choose something else

Choose Sora 2 when:

- You want short cinematic clips with synchronized audio out of the box.

- You need believable multi-object physics without assembling complex pipelines.

- You want a creator-friendly app that balances automatic realism with useful controls.

Choose Runway (or similar) when:

- You need maximum photoreal fidelity across complex, multi-shot productions and pro-style integration with editing tools.

Choose Pika or other nimble tools when:

- Speed, ease of use, and stylized social content matter more than exact photographic realism.

Final assessment: how realistic is Sora 2?

Sora 2 marks a meaningful step forward in the realism of AI-generated video. Its native audio generation, improved physics, and practical multi-shot controls make it feel more “complete” than many predecessors and competitors for short-form cinematic content. In head-to-head comparisons, Sora 2 is consistently among the top performers — it narrows the gap between AI-generated clips and real footage in many standard tests. But realism is nuanced: for narrow photoreal benchmarks or pro post-production pipelines, systems like Runway frequently offer complementary strengths. The practical verdict is that Sora 2 is among the most realistic, most usable video models available today, especially if you value integrated audio and believable physics; yet it is not a categorical, across-the-board replacement for other specialized tools.

What to watch next

Expect continued iteration: better long-form consistency, higher-resolution output, and models that more reliably understand causal chains. Also watch for policy and licensing shifts, because deals and restrictions will affect what can be generated and how “realistic” content is allowed to look on public platforms. For creators, the pragmatic advice is to test multiple tools and choose by clip type: dialogue-heavy social clips (Sora 2), cinematic photorealism and studio pipelines (Runway), or rapid social content (Pika).


Conclusion

Sora 2 represents a major milestone in the evolution of AI-generated video, particularly in how closely it approaches real-world realism across multiple dimensions. Its strengths lie not only in visual fidelity but also in its improved understanding of motion, physics, temporal consistency, and—most notably—native audio generation with synchronized dialogue. These elements combine to create videos that feel more cohesive, immersive, and complete than many earlier AI video outputs.

Compared with other leading AI video models, Sora 2 does not dominate every category outright, but it consistently ranks among the top performers. Competitors like Runway excel in professional-grade controllability and cinematic workflows, while tools such as Pika prioritize speed and creative accessibility. What sets Sora 2 apart is balance: it delivers high realism without requiring complex pipelines, making it especially attractive for creators, marketers, and storytellers who want believable results with minimal friction.

Frequently Asked Questions (FAQs)

1. What makes Sora 2 more realistic than earlier AI video models?

Sora 2 improves realism through better physical understanding, smoother motion, stronger temporal consistency, and native audio generation. Unlike earlier models that focused mainly on visuals, Sora 2 integrates sound, dialogue, and cause-and-effect motion, which significantly enhances perceived realism.

2. Is Sora 2 more realistic than Runway or Pika?

It depends on the use case. Sora 2 excels at short, cinematic clips with believable physics and synchronized audio. Runway often performs better in professional workflows that require advanced editing control and consistency across longer shots, while Pika is favored for fast, stylized content rather than strict realism.

3. Can Sora 2 generate realistic human characters and dialogue?

Yes, Sora 2 is particularly strong at generating realistic human scenes, including facial expressions, body movement, and lip-synced dialogue. While minor artifacts can still appear, the overall quality is noticeably more natural than most previous-generation AI video models.

4. What are the main limitations of Sora 2?

Sora 2 can still struggle with long-form videos, intricate hand movements, and highly complex scenes involving many interacting elements. Like all AI video models, it may occasionally produce visual inconsistencies or subtle physics errors, especially in extended sequences.

5. Who should use Sora 2?

Sora 2 is ideal for content creators, marketers, educators, and storytellers who want realistic AI-generated video without managing complicated production pipelines. It is especially well-suited for short narratives, social media videos, concept visuals, and dialogue-driven scenes.
